Generative AI is exacerbating the problem of online child sexual abuse materials (CSAM), as watchdogs report a proliferation of deepfake content featuring real victims' imagery.

Published by the UK's Internet Watch Foundation (IWF), the report documents a significant increase in digitally altered or completely synthetic images featuring children in explicit scenarios, with one forum sharing 3,512 images and videos over a 30-day period. The majority were of young girls. Offenders were also documented sharing advice with each other, and even AI models fed by real images.

"Without proper controls, generative AI tools provide a playground for online predators to realize their most perverse and sickening fantasies," wrote IWF CEO Susie Hargreaves OBE. "Even now, the IWF is starting to see more of this type of material being shared and sold on commercial child sexual abuse websites on the internet."

According to the snapshot study, there has been a 17 percent increase in online AI-altered CSAM since the fall of 2023, as well as a startling increase in materials showing extreme and explicit sex acts. Materials include adult pornography altered to show a child’s face, as well as existing child sexual abuse content digitally edited with another child's likeness on top.

"The report also underscores how fast the technology is improving in its ability to generate fully synthetic AI videos of CSAM," the IWF writes. "While these types of videos are not yet sophisticated enough to pass for real videos of child sexual abuse, analysts say this is the ‘worst’ that fully synthetic video will ever be. Advances in AI will soon render more lifelike videos in the same way that still images have become photo-realistic."

In a review of 12,000 new AI-generated images posted to a dark web forum over a one-month period, 90 percent were realistic enough to be assessed under existing laws for real CSAM, according to IWF analysts.

Another UK watchdog report, published in the Guardian today, alleges that Apple is vastly underreporting the amount of child sexual abuse materials shared via its products, prompting concern over how the company will manage content made with generative AI. In its investigation, the National Society for the Prevention of Cruelty to Children (NSPCC) compared official numbers published by Apple to numbers gathered through freedom of information requests.

While Apple made 267 worldwide reports of CSAM to the National Center for Missing and Exploited Children (NCMEC) in 2023, the NSPCC alleges that the company was implicated in 337 offenses of child abuse images in England and Wales alone, and those numbers cover only the period between April 2022 and March 2023.

Apple declined the Guardian's request for comment, pointing the publication to a previous company decision to not scan iCloud photo libraries for CSAM, in an effort to prioritize user security and privacy. Mashable reached out to Apple, as well, and will update this article if the company responds.

Under U.S. law, U.S.-based tech companies are required to report cases of CSAM to the NCMEC. Google reported more than 1.47 million cases to the NCMEC in 2023. Facebook, in another example, removed 14.4 million pieces of content for child sexual exploitation between January and March of this year. Over the last five years, the company has also reported a significant decline in the number of posts reported for child nudity and abuse, but watchdogs remain wary.

Online child exploitation is notoriously hard to fight, with child predators frequently exploiting social media platforms, and loopholes in their conduct rules, to continue engaging with minors online. Now, with the added power of generative AI in the hands of bad actors, the battle is only intensifying.

Read more of Mashable's reporting on the effects of nonconsensual synthetic imagery:

What to do if someone makes a deepfake of you

Explicit deepfakes are traumatic. How to deal with the pain.

The consequences of making a nonconsensual deepfake

Victims of nonconsensual deepfakes arm themselves with copyright law to fight the content's spread

How to stop students from making explicit deepfakes of each other

If you have had intimate images shared without your consent, call the Cyber Civil Rights Initiative’s 24/7 hotline at 844-878-2274 for free, confidential support. The CCRI website also includes helpful information as well as a list of international resources.


JACL Calls on MLB to Fully Address Anti

JACL Calls on MLB to Fully Address Anti

Former Incarcerees Invited to July 5 Flag

Former Incarcerees Invited to July 5 Flag

CAPAC Chair Commemorates Bruce Lee’s 80th Birthday

CAPAC Chair Commemorates Bruce Lee’s 80th Birthday

Yuen Named to Human Relations Commission

Yuen Named to Human Relations Commission

Manzanar Committee Mourns Loss of Congressman Dellums

Manzanar Committee Mourns Loss of Congressman Dellums

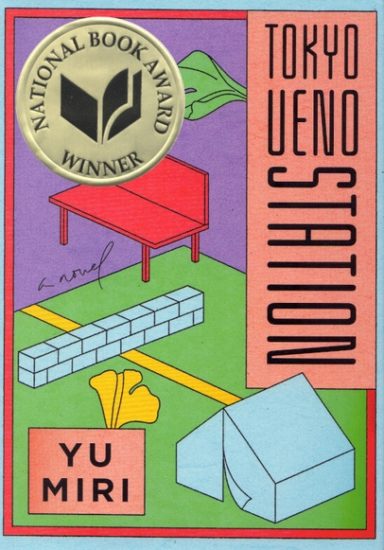

‘Tokyo Ueno Station’ Wins National Book Award

‘Tokyo Ueno Station’ Wins National Book Award

IT PAYS TO KNOW: Memorial Day

IT PAYS TO KNOW: Memorial Day

THROUGH THE FIRE: Radicalization of the Nisei

THROUGH THE FIRE: Radicalization of the Nisei

LTSC Hosts Community Building Seminar

LTSC Hosts Community Building Seminar

AADAP Announces Change in Leadership

AADAP Announces Change in Leadership

Gardena Buddhist Church Honors 2018 Graduates

Gardena Buddhist Church Honors 2018 Graduates

Message from S.F. Japantown United Against Hate

Message from S.F. Japantown United Against Hate

Kim, Steel Support Bipartisan Bill to Combat Anti

Kim, Steel Support Bipartisan Bill to Combat Anti

Japanese Heritage Night with the Giants

Japanese Heritage Night with the Giants

2018 Ashiya Student Ambassadors Arrive in Montebello

2018 Ashiya Student Ambassadors Arrive in Montebello

IT PAYS TO KNOW: Memorial Day

IT PAYS TO KNOW: Memorial Day

JANM Partners With Japanese Consulate to Present ‘A Taste of Home’ Public Programs

JANM Partners With Japanese Consulate to Present ‘A Taste of Home’ Public Programs

Live Broadcast to Mark Go For Broke Monument Anniversary

Live Broadcast to Mark Go For Broke Monument Anniversary

Eng in the Running for State Senate Seat

Eng in the Running for State Senate Seat

Passion for Teaching Martial Arts Leads to New Gym

Passion for Teaching Martial Arts Leads to New Gym

Wordle today: The answer and hints for December 2KU vs. Mizzou basketball livestreams: Game time, streaming deals, and more'GTA 6' trailer dropped a day early. Here's the release date window.NYT's The Mini crossword answers for December 2How to watch Tennessee vs. Illinois basketball without cable: game time, streaming deals, and moreFeast your eyes on these 'Wonka'Best Solawave deal: Get a skincare wand bundle for $52 offHow to watch OSU vs. PSU basketball without cable: game time, streaming deals, and moreWisconsin vs. U of A basketball livestreams: Game time, streaming deals, and moreWordle today: The answer and hints for December 2 Look out Substack, Ghost will join the fediverse this year Tesla Model 3 Performance is here. Here are 5 things that make it great, and 3 drawbacks. X's new video app is coming to your smart TV 'Baby Reindeer' has seen a wave of armchair detectives. The creator called a halt. iPad Air 2024: It’s not even out yet, but there’s already a case for it on Amazon Best AirPods deal: Get a pair of refurbished Apple AirPods Pro (2nd Gen) for $100 off at Best Buy Lazio vs. Hellas Verona 2024 livestream: Watch live Serie A football for free Best Chromebook deal: Get the Samsung Galaxy Chromebook 2 for $349 at Best Buy Best headphones deal: $100 off Bose QuietComfort Save $50 on the Fitbit Versa 4 Smartwatch

0.1467s , 14356.90625 kb

Copyright © 2025 Powered by 【indian open place school sex videos】Enter to watch online.AI child sex abuse material is proliferating on the dark web. Big Tech,Global Perspective Monitoring