In the days after the election, apoplectic progressive journalists spent their time writing boiling hot takes, trying to find the one CNN chyron or Nate Silver tweet responsible for handing democracy over to a Putin-loving creamsicle. And while no one could ever agree (or admit that they agree) on the real enemy, nearly everyone pointed a finger at the new guy in town: fake news.

Almost instantly, Chrome extensions appeared that made it easier for users to identify fake news. Last week, Facebook even rolled out some far-too-cautious tools to help stop the onslaught. Yet for all of their efficacy, none of these tools will be able to fully curtail the plague of propaganda.

Every tech solution rolled out so far lacks the crucial ingredient necessary to make it work: human contact.

Ugh.

It's impossible to overstate the role fake news -- or propaganda, as seems increasingly likely -- had in this election. A BuzzFeed post-election analysis found that fake news stories significantly outperformed real news stories in the final three months leading to the election. The top 20 best-performing sites generated 8,711,000 shares, likes and reactions, compared to just 7.3 million from reputable news sources.

And while both liberals and conservatives shared fake news, Trump supporters, it seems, were particularly susceptible to it: 38% of fake news shared came from the conservative sites, compared to just 20% from liberal sites.

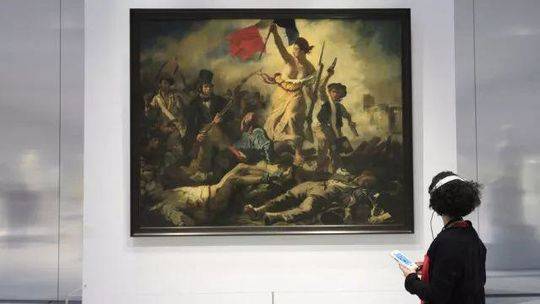

Original image has been replaced. Credit: Mashable

Zuckerberg initially responded to criticism with outright denial, calling the idea that fake news and Facebook influenced the election "pretty crazy." So concerned journalists and software developers stepped in where the platforms didn't. Fake News Alert and B.S. Detector, developed shortly after the election, are both Google Chrome extensions that alert users when the site they're visiting is highly biased, simply clickbait or pure propaganda. Slate also released a Google Chrome extension simply called This is Fake, which helps users identify and report fake news on Facebook.

Facebook itself released their own set of tools last week that make it easier for users to flag fake news, hopefully making it harder for these stories to spread. Content determined to be false by their bipartisan fact-checking partner Poynter will come with a warning label as well as an explanation. Facebook has also said they'll prevent these stories from being advertised. Let's hope.

As powerful as each of these tools may be, none of them will likely go far enough to stop the torrent of fake news -- though they may temper it -- because they all fail to realize "the human element," and subsequently rely on two false premises:

1. The idea that people of different political persuasions are still talking to each other on social media, and therefore capable of spotting and reporting fake news.

2. The belief that people -- the same people who renamed CNN "The Clinton News Network" and screamed "Lügenpresse," an old Nazi term, at the press -- will not project the same hostility towards Facebook, will soften their criticism of The Washington Post or will download a Chrome extension from ultra-progressive Slate. (Breitbart is already railing against Facebook's anti-fake news initiatives.)

This Facebook trending story is 100% made up. Nothing in it is true. This post of it alone has 10k shares in the last six hours. pic.twitter.com/UpgNtMo3xZ

— Ben Collins (@oneunderscore__) November 14, 2016

In order for Facebook's new tools to properly work, for example, users must first identify suspicious-looking content. But all readers, conservative and progressive alike, are inherently biased towards content that reflects their pre-existing political beliefs and values.

Facebook's algorithms fill your News Feed with familiar faces, who are more likely to share stories you like, limiting information diversity and creating echo chambers. In the months surrounding the election, Facebook users unfollowed and sometimes purged users from their feed whose political views didn't align with their own.

So it's strange to imagine why most Facebook users would even be confronted with content they don't like (and that doesn't appeal to their political values) in the first place. If the story fits, people tend to wear it. It remains to be seen if liberals or conservatives -- who both exist in social media echo chambers -- will be able to identify fake news stories that nonetheless appeal to their political values, and still be able to report them.

Why would users report stories that look potentially dubious (a skill, researchers found, many readers just don't have) if those stories neatly correspond with their ways of seeing the world?

There's also the inherent danger -- though perhaps an unavoidable one -- that the Infowars, AddictingInfo and Breitbart readers of this world will soon come to distrust Facebook's fact-checking services. Why would the people who regularly read Alex Jones -- who claimed that Hillary was in league with the devil -- or who believe that Comet Ping Pong Pizzeria was home to a child sex ring led by John Podesta suddenly trust Facebook's judgement on The Washington Post? Facebook has been accused of being liberal before, so it's unclear whether voters paranoid about the mainstream media won't just become even more suspicious of the platform.

Obviously, there are more tools than Facebook's measures that people can use to call out fake news. But even those mechanisms are partisan, and the people who use them, self-selected. Breitbart fans aren't exactly going to go to progressive Slate and download their hottest fact-checking tool. People who spent the months preceding the election actively sharing "Denzel Washington Backs Trump In the Most Epic Way Possible" probably aren't going to read that Mashable article listing the smartest new Chrome extensions for spotting fake news. Those who live and die by The Daily Caller aren't suddenly going to find room in their hearts for little ol' bipartisan Snopes.

In our (almost) post-fact world, we've come dangerously close to post-fact-checking. And that -- perhaps more than anything else that happened this past month -- should scare you.

None of this is to say that these tools won't be effective, or aren't deeply important. Not every Facebook user or Trump supporter is an Infowars reader, and there will surely be many users who will trust Facebook's judgement and subsequently learn how to become better, more critical consumers. (Facebook could also ban some of the more egregious fake news accounts in the first place, or take far more aggressive measures to stop them.)

Propaganda works, and as we've seen this election, can do lasting, potentially lethal damage.

But if voters, particularly progressive voters, are serious about spotting and stopping fake news, they're going to need to commit a truly painful act -- and actually communicate with the people who believe these stories.

The main reason people believe in fake news, researchers found, is simple: because they want to.

Stefan Pfattheicher, a professor at Ulm University, recently told The Washington Post that people believe in fake news not due to a lack of intelligence, but a lack of will.

"This seems to be more a matter of motivation to process information (or news) in a critical, reflective thinking style than the ability to do so," Pfattheicher said.

If "critical readers" have any hope of stopping the fake news explosion, they'll need to do more than rely on external fact-checking tools or New York Times hyperlinks. They'll need to keep people in their Facebook feeds who they disagree with, and try to have radically empathetic, compassionate conversations with them off of the Internet.

The goal shouldn't end at halting the speed of a news story about "How all Muslims are terrorists" but at curbing people's desire to share that story, or believe in that hate, in the first place. And that means talking to people who disagree with you, people whose politics and values violently clash with your own.

Who wants to do that? No one, of course. (Raises hand.) It's awful, frequently traumatizing and, for anyone who's ever confronted an egg avatar on Twitter, often a waste of time.

Real change happens, however, when core beliefs change too. Since the election, organizers have shared tools for people to use to try and "convince" other people that their world views are distorted, even dangerous. Key to the design of every one of these tools is moving beyond facts and into the realm of the personal. For many, facts have become too partisan. Emotion, first-person stories and relationship-building often change more hearts and minds than a Politifact link or Facebook debunk.

Fake news is here to stay, and platforms, software developers and people will need to imagine even more aggressive ways to kill it. Tools will help. So will Chrome extensions. But the only real way to help people to believe in facts again is to magically, somehow, go beyond them and have a conversation.
